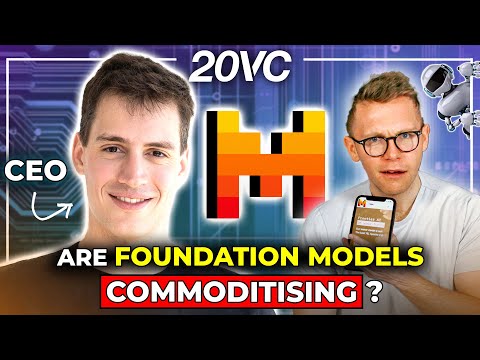

Arthur Mensch: Open vs Closed - Who Wins and Mistral's Position | E1146

Harry Stebbings (host), Arthur Mensch (guest), Narrator

In this episode of The Twenty Minute VC, host Harry Stebbings talks with Arthur Mensch, co-founder and CEO of Mistral.

Mistral CEO on Efficient AI, Open Source, and Europe’s Bet

Arthur Mensch, co-founder and CEO of Mistral, explains how the company competes in foundational AI models by focusing on efficiency, compression, and open-source distribution rather than sheer scale of compute.

He argues that while large general-purpose models will remain central, real differentiation and value will accrue in customization platforms and vertical applications built by developers and enterprises.

Mensch discusses constraints in compute, data quality, and evaluation, as well as the importance of brand, cloud partnerships, and governance in building a durable AI business.

He also reflects on Europe’s opportunity in AI, capital and talent dynamics, and his own learning curve scaling from researcher to CEO of a fast-growing, globally ambitious company.

Key Takeaways

Efficiency and compression can offset smaller compute budgets.

Mensch emphasizes that algorithmic improvements and model compression (e. ...

General models will be foundations; real differentiation comes from customization.

He predicts that generic LLMs will be starting points, while value moves into platforms and tools that let developers and enterprises create specialized, low-latency models tuned to their own data and use cases.

Data quality and evaluation, not just compute, now bottleneck progress.

For text-based models, Mensch argues that high-quality, task-specific data and precise evaluation frameworks (e. ...

Open source builds brand, trust, and demand that drive distribution.

By releasing strong open models, Mistral created developer mindshare and a trusted brand, which then supports enterprise adoption and platform revenue—even while selectively licensing some larger, closed models.

Developer priorities are cost, customization, and deployment flexibility.

Mensch notes that AI builders care less about hype benchmarks and more about unit cost, the ability to deeply customize models without PhD-level expertise, and being able to deploy on any cloud, on-prem, or edge environment with strong data control.

Cloud and hardware partnerships are strategically critical, not optional.

Given the capital intensity and GPU bottlenecks, tight relationships with NVIDIA and major cloud providers both unlock compute and provide the distribution channels enterprises already trust and buy through.

Europe has real AI potential but lacks growth capital depth.

Mensch believes Europe has top-tier technical talent and market opportunity, but is constrained by a younger, thinner growth-stage VC ecosystem; political will and a few breakout successes could change the trajectory over time.

Notable Quotes

“A team of five is faster than a team of fifty, unless you organize the fifty into ten teams of five that are sufficiently uncoupled.”

— Arthur Mensch

“General-purpose models are going to be a starting point for any AI application developer; the differentiation comes from the data you put into it and the user feedback you gather.”

— Arthur Mensch

“Brand seems to be critical. People use certain models because they are known to be good—you can’t afford to evaluate everything out there.”

— Arthur Mensch

“Usually, the value tends to accrue where most of the difficult part is and most of the defensibility is.”

— Arthur Mensch

“We are just trying to move humanity to a higher level of abstraction so we can now talk to machines and machines can understand and answer in a human-like fashion.”

— Arthur Mensch

Questions Answered in This Episode

How far can efficiency and compression realistically go before scale again becomes the dominant advantage in foundational models?

What kinds of tooling and abstractions are needed to let non-expert developers safely and effectively customize powerful models for narrow, high-stakes domains?

How should enterprises think about balancing open-source flexibility and transparency with the perceived safety and simplicity of closed, cloud-native AI offerings?

If data quality and evaluation are now the main bottlenecks, who is best positioned to build and own the critical datasets and benchmarks that matter?

What specific policy or capital-market changes would most accelerate Europe’s ability to sustain globally competitive AI companies like Mistral?

Transcript Preview

Do you feel like you have enough cash now? (cash register dings)

Uh, I guess a startup is always fundraising.

(laughs) What are the biggest barriers to Mistral today?

We are still bottlenecked by compute for sure, but that's because we don't have many of it. We have 1.5k H100s, which is a few percent of our competitors.

Was it a mistake for you to not scale that quicker?

I mean, you can't really scale that much quicker. You can't raise, like, two billion on the seed round. I mean, at least you couldn't in 2023.

Ready to go? (instrumental music plays) (mouse clicking) Arthur, I am so excited for this. JC introduced us quite a long time ago now. I've known you for a while. I've been wanting to make this happen for a while. So thank you so much for joining me today.

Thank you for having me. Um, it's a pleasure.

Ah, the pleasure is mine, my friend. But I wanna start, what would your, or how would your parents or teachers have described the young Arthur? I'm just always intrigued by the characteristics and traits of the best founders. How would they have described a nine, 10-year-old Arthur?

I guess I was a bit curious and a bit stubborn, uh, I should say. Uh, and, uh, uh, not very nice (laughs) to my, to my brothers, I think. But, uh, that, that improved over time, uh, uh, was the eldest of, of them also. I don't know. You should ask them, uh, but it's, uh, I don't know, I think they, they have good, uh, good memories hopefully.

Do you know what? Sadly, your mother wasn't in our reference list, so we missed that one out, but, uh- (laughs)

Yes. Okay.

... you know, J- JC provided some great commentary. So I do wanna start though also, you know, growing up, what was your first exposure to AI? You're a kid in France, how did you first get exposed to AI and machine learning, and what was that first passion point?

I think that was Andrew Ng flying a helicopter, an helicopter backward. Uh, it was, uh, flipped around, and, uh, it's a, it's a control problem which is not easy to solve, and I'm not sure if it was really AI related. I think he was saying that it, he was using a neural network to control all of this. But, um, that's the latest I remember of, uh, well, the first memory of, uh, me being, uh, shown what you could do with, uh, machine learning at the time. Uh, and so that, that was in 20- 2013, I think.

(laughs) M- most recently though you spent, you had two and a half years, three years at DeepMind. Can I ask, what are the biggest takeaways for you from that experience and how did they impact how you think about building Mistral?
