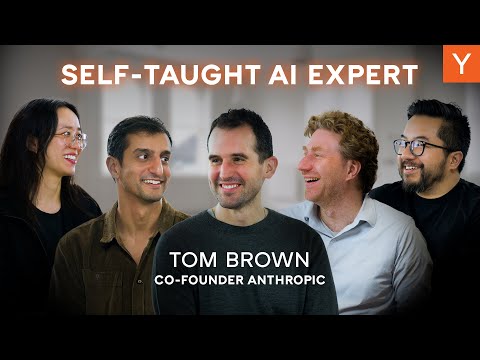

Anthropic Co-founder: Building Claude Code, Lessons From GPT-3 & LLM System Design

Tom Brown (guest), Garry Tan (host), Jared Friedman (host)

In this episode of Y Combinator's The Light Cone, hosts Garry Tan and Jared Friedman talk with Anthropic co-founder Tom Brown about scaling AI, building Claude Code, lessons from GPT-3, and LLM system design.

Anthropic Co-founder on Scaling AI, Claude Code, and Startup Grit

Tom Brown, Anthropic co-founder and former OpenAI/GPT-3 engineer, traces his path from early YC startups through OpenAI and Google Brain to co-founding Anthropic with a mission-focused team. He explains how scaling laws and infrastructure choices around GPUs, TPUs, and Trainium shaped GPT-3 and Anthropic’s current systems, and why compute is driving humanity’s largest-ever infrastructure build-out.

The conversation dives into Anthropic’s early days, the uncertainty around products, and how Claude 3.5’s unexpectedly strong coding performance and the launch of Claude Code became turning points for the company. Brown highlights their philosophy of not “teaching to the test,” focusing on internal evals, dogfooding, and building tools where Claude itself is treated as a core “user.”

He also discusses competitive dynamics with startups, the importance of mission-aligned culture, and the massive constraints emerging around power, hardware, and data centers.

For younger engineers, Brown emphasizes taking more risks, optimizing for work that an idealized version of themselves would be proud of, and not over-indexing on traditional credentials.

Key Takeaways

Treat uncertainty in early careers as a training ground for initiative, not a deficit.

Brown contrasts big-tech roles with early-stage startups, where he learned to stop waiting for tasks and adopt a ‘wolf’ mindset instead: taking ownership of finding and creating work, a shift that later let him tackle ambitious AI projects.

Scaling laws plus better algorithms made massive AI progress predictable years in advance.

Seeing a near-straight scaling curve over ~12 orders of magnitude convinced Brown and colleagues to pivot fully into scaling, anticipating that more compute applied with the right recipe would reliably increase capability.
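
Scaling curves like this are usually drawn on log-log axes, where a straight line corresponds to a power law: if loss scales as L(C) = a·C^(−α), then log L = log a − α·log C. The sketch below shows how such a line is fitted and extrapolated. It uses synthetic points generated from made-up constants (not data from the episode or from any real model), and the function name `fit_power_law` is hypothetical. It applies ordinary least squares in log space to recover the exponent and then extrapolates one decade past the fitted range.

```python
import math


def fit_power_law(compute, loss):
    """Fit loss ~= a * compute**(-alpha) by least squares on log10-log10 axes."""
    xs = [math.log10(c) for c in compute]
    ys = [math.log10(v) for v in loss]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # The slope of the best-fit line in log space equals -alpha.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return 10 ** intercept, -slope


# Hypothetical constants, used only to generate synthetic points.
A_TRUE, ALPHA_TRUE = 2.0, 0.05
compute = [10.0 ** k for k in range(13)]  # 10^0 .. 10^12: 12 orders of magnitude
loss = [A_TRUE * c ** (-ALPHA_TRUE) for c in compute]

a, alpha = fit_power_law(compute, loss)
# Extrapolate one order of magnitude beyond the fitted range.
next_loss = a * (10.0 ** 13) ** (-alpha)
print(f"a={a:.3f} alpha={alpha:.3f} predicted loss at 1e13={next_loss:.4f}")
```

Real measurements are noisy, so a real fit is only approximate. Still, once the line stays straight across many orders of magnitude, extrapolating it takes a single calculation, which is one way to read the claim that progress looked predictable years in advance.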

Infrastructure decisions (chips, frameworks, and software stacks) are strategic leverage points.

The move from TPUs/TensorFlow to GPUs/PyTorch at OpenAI sped up iteration and enabled GPT-3’s scale. Anthropic now deliberately runs on GPUs, TPUs, and Trainium so it can use as much available compute capacity as possible and match each job to the right chip, despite the added engineering overhead.

Mission-first hiring and transparent communication can keep politics low even in a 2,000-person lab.

Anthropic’s founding group and first ~100 employees joined largely for existential safety motives, not prestige, which Brown credits with preserving a mission-oriented culture and making it easier to call out misaligned behavior.

Not optimizing for public benchmarks can produce better real-world performance.

Anthropic avoids teams dedicated to ‘gaming’ published benchmarks. It focuses instead on internal evals and dogfooding (especially for code) to improve practical usefulness, which helps explain why founders often report coding gains larger than benchmark deltas would suggest.

Treating the model itself as a ‘user’ unlocks better agentic tools like Claude Code.

Claude Code grew out of an internal engineer’s work on tools that give Claude the right context and capabilities to act effectively. It reframes product design around empowering the model as an agent rather than only the human developer.

AI is driving an unprecedented global compute and power infrastructure boom.

Spending on AGI compute is growing roughly 3x per year, already rivaling or exceeding historical mega-projects like Apollo and Manhattan, with power (especially in the U. ...
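
A worked example of what “roughly 3x per year” implies, assuming for illustration that the rate stays constant. If spending is S_0 today, it is S_0·3^n after n years. The doubling time comes from solving 3^t = 2:

```latex
\begin{aligned}
S_n &= S_0 \cdot 3^{n}, \qquad \frac{S_5}{S_0} = 3^{5} = 243,\\
3^{t} = 2 \;&\Rightarrow\; t_{\text{double}} = \frac{\ln 2}{\ln 3} \approx 0.63\ \text{years} \approx 7.6\ \text{months}.
\end{aligned}
```

At that pace, spending would double roughly every seven to eight months and grow more than 200-fold in five years.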

Notable Quotes

“Big tech just teaches you to work at a big tech company, whereas it’s much more fun to be a wolf.”

— Tom Brown

“Seeing that line of reliably you get more intelligence if you spend more compute with the right recipe was the main thing that was like, this is happening now.”

— Tom Brown

“We don’t teach to the test, because if you start doing that, then it has weird bad incentives.”

— Tom Brown

“One thing that’s interesting to look at is just that humanity is on track for the largest infrastructure build-out of all time.”

— Tom Brown

“Taking more risks is wise… work on stuff where an idealized version of yourself would be really proud of you if you succeeded.”

— Tom Brown

Questions Answered in This Episode

How might Anthropic’s choice to avoid ‘teaching to the test’ on public benchmarks influence long-term safety and competitiveness compared to labs that aggressively optimize those scores?

What new product categories become possible if you fully design around ‘model-as-user’ rather than human-as-user, and which industries are likeliest to be transformed first?

Given the projected 3x-per-year growth in AGI compute, how should policymakers and utilities prepare for the power and permitting demands of AI data centers?

Where is the practical limit of scaling laws: do we expect them to hold indefinitely, or are there foreseeable physical, economic, or algorithmic ceilings?

For young engineers today, what concrete signals distinguish a genuinely mission-driven AI lab or startup from one that is primarily prestige- or hype-driven?

Transcript Preview

When we started out, we didn't seem like we were gonna be successful at all. (laughs) OpenAI had a billion dollars and like all of these, all of this star power, and we had seven co-founders (laughs) in COVID, like, trying to build something, and we didn't know if we were necessarily going to make a product or what the products would look like. One thing that's interesting to look at is just that humanity is on track for like the largest infrastructure build-out of all time.

Tell us about the early days of Anthropic. So, you had a general idea of this sort of like long-term mission that you wanted to do to, you know, not destroy humanity. But like, what did you actually work on for the first year? How did that converge on an actual product?

Welcome back to another episode of The Light Cone. Today, we've got a real treat, co-founder of Anthropic, Tom Brown.

Excited to be here.

So, Tom, one of the things that a lot of the people watching, uh, would love to figure out is, you got started in tech at the age of 21, fresh from MIT. How does someone go from that in 2009 to literally co-founding something as important as Anthropic?

Summer 2009, Linked Language, two of my friends had started that out. I think they had seen one of our other friends, Kyle Vogt, kind of do a YC company, and so it was in the water that that's a thing that we could try to do. They started out, I was the first employee. Back then, yeah, you guys let me join for all the dinners and stuff like that too. I could have instead gone to like a big tech company or something like that, and I think probably just as a software engineer, I might have learned more software engineering skills. But I think by being there with the other co-founders, without anyone telling us what to do. (laughs)

(laughs)

Basically, we like, we had to figure out how to live, how to like... The company would die by default. I- I think in school, there was a lot of like a feeling of more of, people would give me tasks and I would do the tasks. It was kind of like a dog waiting for like food to be-

(laughs)

... like, fed to them in their bowl or something like that. And I think for that company, it was more like wolves, and we have to, like, hunt for food, otherwise like we're, our kids are gonna starve or something like that. I think that that mindset, I think, has been like the most valuable mindset, that shift that I've had for trying to do like bigger, more exciting things.

Yeah, big tech just teaches you to work at a big tech company, whereas, uh, it's much more fun to be a wolf.

Yeah. (laughs)

How did you go from like, so working at friend's startup to then you started your own one?
