Jensen Huang – TPU competition, why we should sell chips to China, & Nvidia’s supply chain moat

Dwarkesh Patel (host), Jensen Huang (guest)

In this episode of the Dwarkesh Podcast, Dwarkesh Patel talks with Jensen Huang about TPU competition, why Huang thinks the US should sell chips to China, and Nvidia’s supply-chain moat.

Jensen Huang on Nvidia’s moats, TPUs, clouds, and China policy

Huang argues Nvidia’s core value is “electrons to tokens” conversion, where deep co-design across chips, systems, networking, and software makes commoditization unlikely.

He frames Nvidia’s supply-chain moat as a function of credible downstream demand that convinces upstream partners (foundries, packaging, memory, photonics) to invest and scale bottlenecks within ~2–3 years.

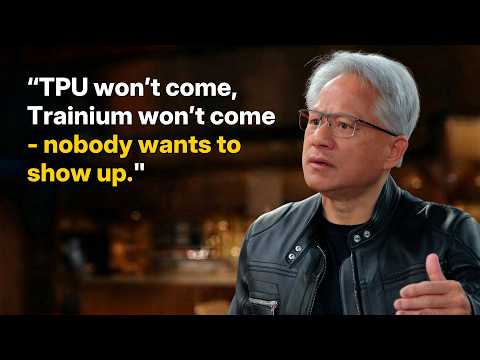

On TPUs/ASICs, he claims GPUs win because AI progress depends on programmability and frequent algorithmic shifts (not just matrix multiply), plus Nvidia’s install base and engineering support for customers’ stacks.

He explains why Nvidia avoids becoming a hyperscaler: clouds already exist, while Nvidia focuses on the “hard part” (platform + ecosystem) and selectively invests to ensure partners and AI labs can scale.

In a long debate on China export controls, Huang contends China has ample energy, talent, and chip capacity to progress regardless, and that conceding the China market risks splitting ecosystems and weakening the American tech stack long-term.

Key Takeaways

Nvidia’s moat is full-stack co-design, not just chip specs.

Huang repeatedly emphasizes that big gains (e. ...


Supply-chain leverage comes from credible demand and ecosystem coordination.

He claims upstream partners invest because Nvidia can reliably absorb supply and sell through massive downstream demand; events like GTC help align upstream and downstream players around the size and timing of what’s coming.


Most bottlenecks are solvable quickly if the demand signal is clear; energy is slower.

Huang argues packaging, fabs, and even EUV capacity can ramp in a few years once planning is aligned, while grid/energy policy and physical buildout of power infrastructure are the longer-duration constraints.


Programmability matters because AI algorithms change faster than hardware cycles.

He argues a TPU-like systolic array can be great for today’s kernels, but rapid leaps require trying new attention mechanisms, hybrid architectures, disaggregation, and new kernels—work that benefits from a general programmable platform (CUDA).


CUDA’s defensibility is install base plus Nvidia’s hands-on performance engineering.

Even if hyperscalers can write kernels, Huang says Nvidia’s own engineers (and AI-assisted optimization) can often unlock 1. ...


Nvidia avoids becoming a hyperscaler to prevent ecosystem conflict and focus on what only Nvidia will do.

He frames cloud as a business others will fill, whereas CUDA, domain libraries, and accelerated computing platforms required decades of risk-taking; Nvidia instead supports “neo clouds” via selective investment without becoming a financier/operator itself.


On China, Huang’s strategic fear is ecosystem bifurcation more than short-term chip denial.

He argues China will reach capability thresholds anyway via energy, talent, and domestic scaling; the bigger risk is pushing developers and open models onto a non-American stack, creating long-run standards and optimization gravity away from Nvidia/US tech.


Notable Quotes

“The input is electron, the output is tokens. That is, in the middle, Nvidia.”

— Jensen Huang

“We should do as much as needed, as little as possible.”

— Jensen Huang

“None of the bottlenecks last longer than a couple, two, three years. None of them.”

— Jensen Huang

“Our GPUs… are kind of like F1 racers.”

— Jensen Huang

“Comparing AI to anything that you just mentioned is lunacy.”

— Jensen Huang

Questions Answered in This Episode

What specific internal metrics does Nvidia use to decide a supply-chain bottleneck is “two to three years” from resolution (e.g., CoWoS, HBM, silicon photonics)?


If hyperscalers increasingly rely on Triton/custom stacks, what parts of CUDA become less important—and which parts become more important—to Nvidia’s moat?


Huang claims Nvidia has best inference TCO and invites others to show results; what benchmark conditions (batching, latency targets, context length, networking) most change the TPU/Trainium vs GPU comparison?


If energy is the real long-term constraint, what concrete policy or infrastructure actions would most increase US “tokens per watt” capacity over the next decade?


Huang argues China can compensate for weaker chips with more energy and more chips; where are the real hard limits—HBM bandwidth, interconnect, software maturity, or something else?


Transcript Preview

We've seen the valuations of a bunch of software companies crash because people are expecting AI to commoditize software. And there's a, a potentially naive way of thinking about things, which is like, look, Nvidia sends a GDSII file to TSMC. TSMC builds the logic dies, it builds the switches, um, then it packages them with the HBM that SK Hynix and Micron and Samsung make. Then it sends it to an ODM in Taiwan where they assemble the racks. And so Nvidia is fundamentally making software that other people are manufacturing, and if software gets commoditized, does Nvidia get commoditized?

Well, in the end, something has to transform electrons to tokens. That transformation, um, there's no-- The transformation of electrons to tokens, uh, and making those tokens more valuable over time, uh, I, I don't-- I think that, that, that's hard to, hard to, um, completely commoditize. The, the transformation from electrons to tokens is such an, such an incredible journey and, and making that token, you know, it's like making a one molecule more valuable than another molecule, making one token more valuable than another. The amount of artistry, engineering, science, invention that goes into making that token valuable, uh, obviously we're, we're watching it happening in real time. And so, so the, the, the, the transformation, the manufacturing, um, all of the science that goes in there i- is far from un- deeply understood-

Mm.

... and is far from-- the journey is fro- from, far from over. And so, so I, I doubt that it will happen. Um, we're gonna make it more efficient, of course. I mean, the whole, the whole thing about Nvidia, i-in fact, the way that you framed the question is, is my mental model of our company. The input is electron, the output is tokens. That is, in the middle, Nvidia, and our job is to, to do as much as necessary, as little as possible to enable that transformation to be done at incredible capabilities. And, and what I mean by as little as possible, whatever I don't need to do, I partner with somebody and I make it part of my ecosystem to do. And if you look at Nvidia today, we probably have the largest ecosystem of partners, both in supply chain upstream, supply chain downstream, all of the computers, computer companies, and all the application developers and all the model makers and all the... You know, AI is a five-year, five-layer cake, if you will, and, and we have ecosystems across the entire five layers. And, and so we try to do as little as possible, but the part that we have to do, as it turns out, is insanely hard.

Mm.

And, and, um, I, I don't think that that gets commoditized. In fact, in fact, um, uh, I also don't think that the, the enterprise software companies, uh, the tools makers... You know, most of the software companies today are tools makers. Um, some of them are not. Um, but are, are-- Some of them are workflow, um, codification, you know, systems. Um, but for a lot of companies, they're tool makers. For example, you know, Excel is a tool, PowerPoint's a tool, uh, Cadence makes tools, Synopsys makes tools. I, I actually see the opposite of what people see. I think the number of agents are going to grow exponentially. The number of tool users are gonna grow exponentially, and it's very likely that the number of instances of all these tools are gonna skyrocket. It is very likely the number of instances of Synopsys Design Compiler is gonna skyrocket, and the number of, number of agents that are gonna be using the floor planners and all of our layout tools and our design, d-design rule checkers, the number of agents that are-- Today we're limited by the number of engineers. Tomorrow, those engineers are gonna be supported by a bunch of agents, and we're gonna be exploring out the design space like you've never seen explored before, and we're gonna use the tools that we use today. And so, so I think, I think tool use is gonna cause, cause the software companies to skyrocket. The reason why it hasn't happened yet is because the agents aren't good enough at using their tools yet. And so either these companies are gonna build the agents themselves, or agents are gonna get good enough to be able to use those tools, and I think it's gonna be a combination of both.
